People Are Likely Wary Because Artificial Intelligence (AI) Voice Technology Offers Fewer Opportunities for Monitoring and Control Than Current AI Tools. The Majority of People (81%) Also Want Conversational AI to Declare Itself as Non-Human, Indicating People’s Discomfort With the Increasingly Blurred Lines Between AI and Human Voices

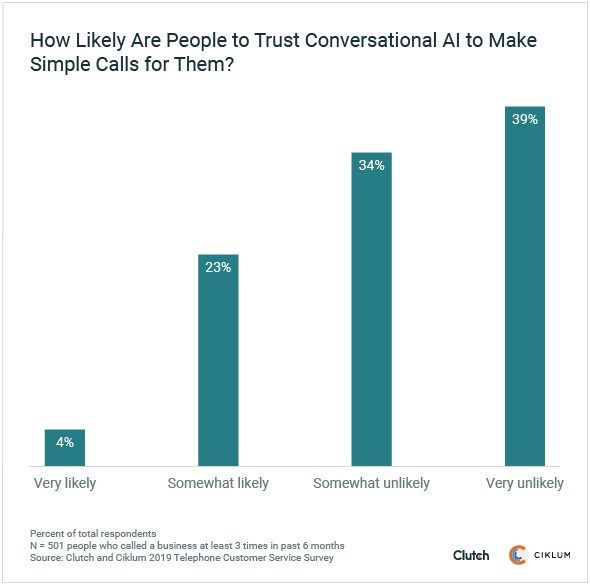

Nearly three-quarters of people (73%) say they are somewhat or very unlikely to trust a tool such as Google Duplex to correctly make simple calls for them. Duplex is a tool within Google Assistant that can call restaurants and book reservations using an AI-powered voice. Duplex caused controversy when it was unveiled in spring 2018 because of how lifelike its voice sounded.

Graph – How likely are people to allow conversational AI to make simple calls for them?

This data comes from Clutch, the leading B2B ratings and reviews firm, and Ciklum, a global digital solutions company. Clutch and Ciklum surveyed 501 people who called businesses at least three times in the past six months to understand their comfort level with conversational AI tools.

Experts mentioned that people often feel hesitant toward new technologies that later become accepted, such as ATMs or self-checkout lines.

“To varying degrees, we’re all vulnerable to different forms of this anxiety when it comes to adjusting to new ideas or technological advances,” said Ivan Kotiuchyi, research engineer at Ciklum.

AI voice technology, however, presents new obstacles to winning consumer confidence because people have little ability to monitor what it is doing.

“On the computer, you have a user interface,” said Daniel Shapiro, chief technology officer and co-founder of Lemay.ai, an enterprise AI consulting firm. “… You can see what it’s doing. Over the phone, you really have no idea what it’s capable of doing. You have to just believe.”

Yet, experts say that as a tool like Duplex becomes more commonplace and demonstrates its success, people will grow to trust it.

Conversational AI Opens New Security Risks

More than 8 in 10 people (81%) want AI voice technology such as Duplex to declare itself as a robot before proceeding with a call.

This desire for identification shows that people may be wary of the ways in which AI voices can be used for manipulation.

Criminals could use conversational AI to automate increasingly realistic scam calls.

“Imagine a call that comes from your boss,” WatchGuard Technologies Chief Technology Officer Corey Nachreiner said in GeekWire. “It sounds like your boss, talks with his or her cadence but is actually an AI assistant using a voice imitation algorithm. Such a call offers limitless malicious potential to bad actors.”

Overall, the survey indicates that people are presently uncomfortable with conversational AI but will likely embrace it as it grows in popularity. Still, people should be wary of scams that exploit the technology behind Google Duplex for malicious purposes.
