Amsterdam, Netherlands – August 7, 2019 – AI-powered software company Sightcorp has improved the detection component of its facial analysis and recognition software by focusing on Deep Learning rather than the classical Haar Cascade detector methodology. The work is part of Sightcorp’s effort to make its AI-powered software more effective for users looking to gain deeper insight into moment-to-moment interaction.

From capturing and quantifying emotions and moods to analyzing demographics and providing actionable, reliable data on customers’ attention spans, Sightcorp aims to give users the insight they need to make informed, predictive decisions. The initiative focused on improving the software’s ability to detect faces across varying head poses with greater accuracy, speed, and granularity. For Sightcorp, more than reputation was at stake: the broader aim was an AI-powered face detection solution that works across a wide variety of applications.

The fact is that not all faces and behaviors are alike, and faces cannot be expected to be completely visible at all times. In some eastern countries, for example, the wearing of face masks makes it difficult to detect the presence of a face. Add the challenge of staying accurate across different image and video resolutions, distances, lighting conditions, camera angles, and head poses, and you have a legitimate issue.

It’s an “issue” that Sightcorp decided to address head-on, before it ever became a major problem, by introducing a new methodology. To begin, the team set out to measure face detection performance across variations in head pose.

Since most modern datasets already include annotations with some indication of the head pose, Sightcorp had a baseline from which to work. Datasets such as WIDER FACE, which tags images as “typical pose” or “atypical pose”, and VGGFace, which divides poses into “front”, “three-quarter”, and “profile”, pointed the way forward: Sightcorp’s own evaluation would need to go further and quantify detection performance across granular variations in yaw, pitch, and roll.

This new focus gave the team an added advantage: they could test for where things were not working. If detection failed at certain values of yaw, pitch, or roll, they could take note. They could also measure the cut-off values beyond which the robustness of the detection process would need to improve. So they rolled up their sleeves and got to work. The team used the Head Pose Image Database from the Prima Project at INRIA Rhone-Alpes, a “head pose database – a benchmark of 2790 monocular face images of 15 persons with variations of pan and tilt angles from -90 to +90 degrees. For every person, 2 series of 93 images (93 different poses) are available.”

Using Python, the team wrote a small script to create a ground truth CSV file containing the path to each file in this dataset and its corresponding pitch and yaw values. The Head Pose dataset was especially well suited to this initiative because it encodes yaw and pitch in its filenames.
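A ground truth script of this kind might look like the minimal sketch below. It is not Sightcorp’s actual code. It assumes that each filename ends with a signed tilt (pitch) value followed by a signed pan (yaw) value, as in `personne01146+0+0.jpg`; the function names `parse_pose` and `build_ground_truth` and the CSV column names are illustrative choices.

```python
import csv
import re
from pathlib import Path

# Assumption: filenames end in "<signed tilt><signed pan>.jpg",
# e.g. "personne01146+0+0.jpg" or "personne01100-90+0.jpg".
POSE_RE = re.compile(r"([+-]\d+)([+-]\d+)\.jpe?g$", re.IGNORECASE)


def parse_pose(filename):
    """Return (pitch, yaw) in degrees parsed from a filename, or None."""
    match = POSE_RE.search(filename)
    if match is None:
        return None
    # Tilt is treated as pitch and pan as yaw.
    return int(match.group(1)), int(match.group(2))


def build_ground_truth(image_dir, csv_path):
    """Write one CSV row (path, pitch, yaw) per image; return the row count."""
    rows = []
    for path in sorted(Path(image_dir).rglob("*.jpg")):
        pose = parse_pose(path.name)
        if pose is None:
            continue  # skip files that do not follow the naming scheme
        rows.append([str(path), pose[0], pose[1]])
    with open(csv_path, "w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["path", "pitch", "yaw"])
        writer.writerows(rows)
    return len(rows)
```

Reading the angles from the end of the filename keeps the sketch independent of how the person, series, and image numbers are laid out at the start of the name.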

Next, for each combination of yaw and pitch, there were at least 30 images. That’s how granular the data truly got. But it’s not simply about accounting for every variation. It’s about creating a benchmark that then allows the AI aspect of the software to take over, running its own “learning” process on the set of images and going beyond what’s merely scripted in code. For these images, the face detector should be able to detect 30 faces for every combination of yaw and pitch. The benchmarking script read the CSV file, ran the face detector on each image listed in the path column, and returned the number of faces detected.
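The benchmarking loop described above could be sketched roughly as follows. This is an illustration under stated assumptions rather than the team’s script: the detector is passed in as a hypothetical `detect(path)` callable that returns a list of `(x, y, width, height)` rectangles, and the CSV is assumed to have `path`, `pitch`, and `yaw` columns.

```python
import csv


def run_benchmark(ground_truth_csv, detect):
    """Run a face detector over every image listed in the ground truth CSV.

    `detect` is a hypothetical callable: detect(path) -> list of
    (x, y, width, height) rectangles, one per detected face.
    """
    results = []
    with open(ground_truth_csv, newline="") as f:
        for row in csv.DictReader(f):
            faces = detect(row["path"])
            results.append({
                "path": row["path"],
                "pitch": int(row["pitch"]),
                "yaw": int(row["yaw"]),
                "num_faces": len(faces),
                # Keep the rectangle only when exactly one face was found.
                "rect": tuple(faces[0]) if len(faces) == 1 else None,
            })
    return results
```

Passing the detector in as a function means the same loop can score different detectors, for example a Haar Cascade baseline and a Deep Learning model, against the same ground truth file.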

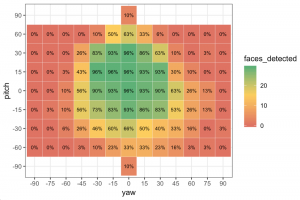

If exactly one face was detected, the output also included the face rectangle (x, y, width, height). To visualize the accuracy of their own newly engineered solution against the “classical” Haar Cascade method, the team plotted the data as a heat map. The results were striking.

The heat map shows that, with the Haar Cascade method, over 30% of the squares in a 10×10 grid had no detections at all (0% faces detected). The Haar Cascade detector worked reasonably well, as expected, for frontal and slightly turned faces. The problem was extreme head poses, where detection performance declined “rapidly”.
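The per-cell percentages behind a heat map like this can be computed with a simple aggregation. The sketch below is illustrative only. It assumes each result is a dictionary with `pitch`, `yaw`, and `num_faces` keys, and, because every image in the dataset shows a single person, it counts an image as a successful detection only when exactly one face is reported; that criterion is an assumption, not something the source specifies.

```python
from collections import defaultdict


def detection_rate_grid(results):
    """Aggregate results into a pitch-by-yaw grid of detection percentages.

    Returns (pitches, yaws, grid), where grid[i][j] is the percentage of
    images with pitch pitches[i] and yaw yaws[j] counted as detected,
    or None if there were no images for that combination.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for r in results:
        key = (r["pitch"], r["yaw"])
        totals[key] += 1
        # Assumption: one face per image, so a hit means exactly one detection.
        if r["num_faces"] == 1:
            hits[key] += 1

    pitches = sorted({p for p, _ in totals})
    yaws = sorted({y for _, y in totals})
    grid = []
    for p in pitches:
        row = []
        for y in yaws:
            n = totals.get((p, y), 0)
            row.append(100.0 * hits.get((p, y), 0) / n if n else None)
        grid.append(row)
    return pitches, yaws, grid
```

The resulting grid can then be handed to a plotting library, for instance matplotlib’s `imshow`, with the yaw and pitch values as axis labels.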

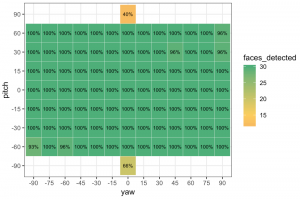

Sightcorp’s own solution, the newly minted “Deep Learning face detector,” on the other hand, returned a 10×10 grid with nearly 100% face detection throughout. It’s a stark difference that gives Sightcorp a significant advantage in handling “extreme” head poses, and yet another feather in its cap.

Says the team, “These visualizations give us actionable insights about the kind of head poses where our face detector can do even better.” For Sightcorp, it’s one small step. For its users, it’s one giant leap: the platform is already being used for retail and digital signage, emotion recognition, attention time, and multiple-person tracking. With this Deep Learning face detection measurement, Sightcorp can now ensure that head positions and gaze are not only captured but also translated into further usable data.

In fact, Sightcorp CEO Joyce Caradonna says, “I am very proud of what the team has achieved with this face detection model. This improvement doesn’t only enhance the software’s core promise, it makes a shift in the way datasets are being used, giving the next generation of comparable analytics platforms that are sure to emerge, a new baseline from which to innovate.”
